2025 LA Wildfires: Workforce-Scale Response Lessons

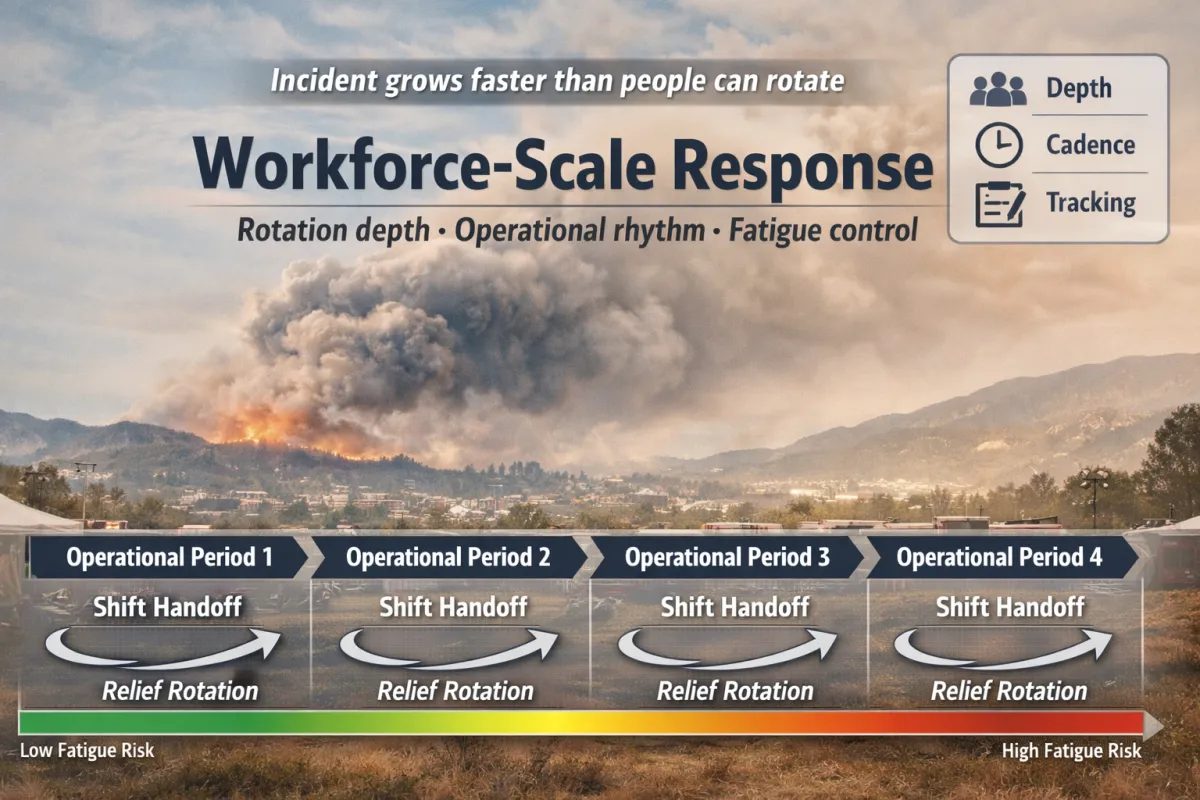

At a certain size, wildfire response stops being a “tactics problem” and becomes a workforce-and-tempo problem. The incident grows faster than your people can rotate.

By Aaron Gilmore — Intergalactic SEM Consultant (humans only so far).

Human-led, Automation-Enhanced. SEM-Artificium

QuickScan

Scale breaks schedules: you can surge people for hours, not weeks—unless rotation depth exists.

Tempo is a control: briefing cadence, shift handoffs, and task tracking decide whether you sustain or spiral.

Two-track ops matters: routine operations must continue while incident operations expand.

Comms load is real work: public info, internal updates, partner coordination, and rumor control consume capacity.

Fatigue is a risk multiplier: tired teams make coordination errors that look like “process issues.”

Event Context (Scope Note)

This article uses the January 2025 Los Angeles County wildfire response period as a case frame. Early figures and details can evolve as after-action products mature; the learning focus here is on repeatable response patterns.

For Who

Primary audience: DoD/Federal supply chain leaders and critical-infrastructure operators. Secondary audience: other organizations and practitioners.

Best for roles: Emergency management, security, operations, facilities, HR/people ops, public affairs/comms, supply chain/logistics leadership, and executive duty officers

What You’ll Get

You will learn: How large incidents become workforce-scale problems—and what “good” looks like for rotation depth, tempo control, and sustained coordination.

You will be able to do: Pressure-test your org’s surge staffing model and build a simple sustained-operations rhythm.

Time & Effort

Read time: ~10 minutes

Do time (optional): 60–90 minutes (run the “rotation depth” self-check and draft a two-track staffing sketch)

Difficulty: Intermediate (leadership + coordination)

Executive Snapshot

What happened: Starting January 7, 2025, multiple wildfires ignited in and around Los Angeles County—including the Eaton and Palisades fires—triggering a large, multi-jurisdiction response and sustained operational periods across agencies and communities (Congressional Research Service [CRS], 2025; CAL FIRE, 2025a; CAL FIRE, 2025b).

Why it matters: When incidents last days to weeks, response success is limited less by “heroics” and more by workforce rotation depth, operational rhythm, coordination capacity, and fatigue controls—especially when the organization still has to run routine operations at the same time (FEMA, 2017; Government Accountability Office [GAO], 2025).

Key lesson: If you can’t staff critical roles repeatedly across multiple operational periods, you don’t have a plan—you have a sprint.

What to do now:

Identify alternates for every critical response/continuity role and validate who can actually rotate into the job for 48–72 hours.

Build a “surge onboarding” checklist (access, comms, briefings, task tracking, demob) so new helpers don’t create new chaos.

Establish a two-track staffing posture (Incident Track vs Routine Track) so you don’t “save the incident” by breaking the business.

Field Notes Opening

At first, it looks like a local emergency: smoke, traffic, school closures, and a shifting evacuation map on everyone’s phone. Then the second-order effects arrive—fast. Security is asked to help with access control and re-entry rules. Operations has to reroute shipments and reprioritize deliveries. HR is trying to locate employees, support displaced families, and figure out what “normal work” even means this week.

And somewhere in the middle of all that, leadership is doing two jobs at once: keeping the enterprise running while building a response posture that can last longer than a day.

Reader Promise: In a few minutes, you’ll understand why large wildfires become workforce-scale response problems—and what “good” looks like for staffing depth, operational rhythm, and sustained coordination.

What We Know (Verified Facts)

Confirmed facts:

Beginning January 7, 2025, ten wildfires ignited in Los Angeles County and adjacent counties; named incidents included the Palisades and Eaton fires among others (CRS, 2025).

CAL FIRE’s incident postings for January 2025 list the Eaton Fire (Altadena/Pasadena area) and the Palisades Fire (Los Angeles County) as major incidents during this period (CAL FIRE, 2025a; CAL FIRE, 2025b).

Los Angeles County published an After-Action Review (AAR) resource page for the Eaton and Palisades fires and later released an independent AAR focused on alert notification systems and evacuation policies (County of Los Angeles, 2025a; County of Los Angeles, 2025b).

CRS notes the event as a multi-incident wildfire period with evolving status updates and federal considerations, reinforcing that large wildfire response often involves multi-level coordination beyond “local only” response (CRS, 2025).

Key actors / organizations involved:

Local: Los Angeles County departments and city partners; public safety agencies; emergency management and public information functions (County of Los Angeles, 2025a).

State/Federal context: state wildfire coordination frameworks and federal considerations as described by CRS; disaster-assistance support mechanisms in major incidents (CRS, 2025).

Impacted assets / operations:

Community impact included evacuations and extended recovery needs; County recovery communications and monitoring products were published following the January wildfire period (County of Los Angeles, 2025c).

What We Don’t Know Yet (Unverified / Evolving)

Open questions / uncertain details:

Final validated totals (losses, long-term health impacts, total economic impact) can change as investigations, claims, and assessments mature.

Workforce-specific performance findings (e.g., where staffing depth most constrained response, how mutual-aid staffing was managed across operational periods) may not be fully visible without comprehensive AARs focused on staffing/tempo.

How far private-sector staffing disruptions (employee displacement, commute impacts, supply chain reroutes, and facility access controls) cascaded across industries remains unclear; such effects vary by sector and are often underreported.

Assumptions used in this article: This article uses the incident as a case frame to teach durable patterns: sustained operations require rotation depth, operational rhythm, and coordination capacity. It does not claim that every organization experienced the same disruption level.

Timeline

January 7, 2025 — Multiple fires ignite in and around Los Angeles County, including the Eaton and Palisades fires (CRS, 2025; CAL FIRE, 2025a; CAL FIRE, 2025b).

January 16, 2025 — CRS posts a status snapshot (time-stamped) as the incident set evolves, emphasizing ongoing impacts and considerations (CRS, 2025).

February 7, 2025 — Los Angeles County announces selection of an independent after-action review effort focused on evacuations and alerting systems (County of Los Angeles, 2025d).

September 25, 2025 — Los Angeles County releases an independent AAR on alert notification systems and evacuation policies for the Eaton and Palisades fires (County of Los Angeles, 2025b).

Why This Matters (So What?)

Operational impact: Large incidents don’t just “add more work”—they multiply the kinds of work: situational awareness, staffing and relief scheduling, logistics, public information, re-entry/access control, partner coordination, and continuity of routine services. The enterprise-level challenge is sustaining that load across operational periods without burning out the same small group of responders.

Risk / threat implications: From a security-and-resilience perspective, workforce strain is a risk amplifier. Fatigued teams make avoidable mistakes (handoff failures, missed approvals, inconsistent messages, incomplete logs) that can create safety issues, liability exposure, and reputational damage. GAO has also highlighted workforce readiness challenges across federal disaster response missions, especially when disasters overlap and persist—an echo of the same constraint private organizations face: people capacity (GAO, 2025).

Governance / compliance implications: If your program “exists on paper” but cannot be staffed, exercised, and sustained, it will fail in the moment you most need it. NIMS/ICS doctrine emphasizes operational rhythm, role clarity, span of control, and planned transitions (FEMA, 2017).

Who should care most (roles/stakeholders): Executives/COO, EM/continuity leads, security/facilities, HR/people ops, public affairs/comms, supply chain/logistics leadership, and IT/collaboration admins.

SEM Doctrine Translation

Doctrine focus: Sustained operations through operational periods (operational rhythm + handoffs); resource management and staffing depth (rotation + credentialing/access) (FEMA, 2017).

Plain-English explanation: Wildfire response at this scale teaches a hard truth: the limiting factor is often not intent, equipment, or even initial speed—it’s sustained staffing. NIMS/ICS is designed around repeating cycles (“operational periods”) because incidents outlast single meetings, single leaders, and single shifts. That cycle—plan, brief, execute, transition—only works when roles can be staffed repeatedly with clean handoffs, documented decisions, and fatigue controls (FEMA, 2017).

For private organizations, the equivalent doctrine is “two-track operations.” You must staff the Incident Track (response cell/EOC functions, coordination, comms, access control, partner liaison) while also staffing the Routine Track (minimum viable operations to keep the mission running). If you staff the incident by gutting operations, operations fail; if you ignore the incident to keep operations running, the incident spreads into the business anyway.
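As a rough illustration of the two-track idea, the sketch below checks whether a function has enough available people to cover both its Incident Track seats and its Routine Track minimum at the same time. The class name, field names, and headcounts are hypothetical placeholders, not figures from the LA County response. The sketch only flags where a split decision is needed; it does not decide which track gets priority.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrackPlan:
    """Headcount plan for one function during one operational period (illustrative)."""
    function: str
    available: int        # people who can actually work this period
    incident_needed: int  # Incident Track seats to fill
    routine_minimum: int  # minimum viable Routine Track staffing


def staffing_shortfall(plan: TrackPlan) -> int:
    """Return how many people short the function is if both tracks are staffed."""
    return max(0, plan.incident_needed + plan.routine_minimum - plan.available)


def functions_needing_decision(plans: list[TrackPlan]) -> dict[str, int]:
    """Map each short-staffed function to its shortfall so a decision authority can act."""
    result = {}
    for plan in plans:
        shortfall = staffing_shortfall(plan)
        if shortfall > 0:
            result[plan.function] = shortfall
    return result


# Hypothetical numbers for illustration only.
plans = [
    TrackPlan("security", available=10, incident_needed=6, routine_minimum=6),
    TrackPlan("logistics", available=12, incident_needed=4, routine_minimum=6),
]
print(functions_needing_decision(plans))
```

Any function that appears in the result cannot fully staff both tracks, which is the point at which someone with authority has to choose what to reduce.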

This is why “surge staffing” must be treated as a system: rosters, onboarding, access/credentialing, just-in-time training, collaboration tooling, and demobilization. A continuity program that cannot surge and rotate is not continuity—it’s documentation.

Controls / practices that apply

Define operational period rhythm for your org (e.g., 12-hour or daily cadence): briefing times, situation reports, handoff format, and decision log owner.

Assign alternates for every critical role and define “relief triggers” (hours-on-duty thresholds, error rate indicators, task backlog thresholds).

Establish two-track staffing (Incident vs Routine) with a named decision authority to split tracks.

Pre-stage access/credentialing for surge staff (facility entry, system accounts, role-based permissions, badging).

Standardize comms: one executive briefing channel, one ops channel, one public/partner messaging path, and one incident log.

Pre-negotiate vendor surge support (security guard force, transportation, lodging, generators, debris, IT collaboration) so capacity expands on Day 2—not Day 12.
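The "relief triggers" control above can be made concrete with a small check like the following sketch. The threshold values (12 hours, 15 open tasks, 3 logged errors) and all names are assumptions chosen for illustration; each organization would set its own limits, and nothing here is drawn from NIMS/ICS doctrine or an after-action report.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReliefTriggers:
    """Assumed thresholds; tune these to your own fatigue and workload policy."""
    max_hours_on_duty: float = 12.0
    max_open_tasks: int = 15
    max_errors_per_shift: int = 3


@dataclass(frozen=True)
class DutyStatus:
    """Snapshot of one responder at a check-in point (illustrative fields)."""
    name: str
    role: str
    hours_on_duty: float
    open_tasks: int
    errors_logged: int


def relief_reasons(status: DutyStatus,
                   triggers: ReliefTriggers = ReliefTriggers()) -> list[str]:
    """Return the triggers this responder has hit; an empty list means no relief flag."""
    reasons = []
    if status.hours_on_duty >= triggers.max_hours_on_duty:
        reasons.append("hours_on_duty")
    if status.open_tasks >= triggers.max_open_tasks:
        reasons.append("task_backlog")
    if status.errors_logged >= triggers.max_errors_per_shift:
        reasons.append("error_rate")
    return reasons


# Hypothetical check-in for illustration only.
on_duty = DutyStatus("Responder A", "Operations lead",
                     hours_on_duty=13.5, open_tasks=4, errors_logged=0)
print(relief_reasons(on_duty))
```

Running a check like this at each briefing makes relief a scheduled decision instead of something that happens only after a visible mistake.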

Scope boundaries (what this incident does NOT prove): This incident does not “prove” one agency’s performance, one technology’s superiority, or a single causal factor for outcomes. It does demonstrate repeatable operational patterns: sustained incidents stress staffing depth, coordination capacity, and communications rhythm, and those stresses must be designed for—not improvised.

Figure 1 - "Surge Staffing = People + Process + Rhythm" [Aaron Gilmore] {Diagram showing surge staffing requires people capacity, defined process, and an operational rhythm.}

Figure 2 - Two-Track Operations Model (Incident Track vs Routine Track) [Aaron Gilmore] {Split model showing dedicated incident roles while maintaining minimum viable routine operations}

Lessons Learned (What this incident teaches)

Lesson 1: Depth beats heroics.

If your plan depends on the same small group working extreme hours, you don’t have resilience—you have exhaustion. Define alternates for every critical role and prove they can rotate in with the needed access and authority.

Lesson 2: Surge is a system, not a group text.

Surge works when onboarding, access, comms, task tracking, and demob are pre-built. Without that system, “more people” increases coordination friction and slows the response.

Lesson 3: Coordination and communication become the first bottleneck.

As incidents expand, demand for accurate updates, partner coordination, and evacuation/operational messaging rises sharply. County after-action work on alerts/evacuations highlights how complex and consequential that communication layer becomes (County of Los Angeles, 2025a; County of Los Angeles, 2025b).

Role-Based Implications (Who should do what)

Leadership / Executives

Approve a two-track staffing model (Incident vs Routine) and define triggers to activate it.

Fund rotation depth: alternates, cross-training, and a surge onboarding system (not just a written plan).

Set decision thresholds: when to reduce services, pause nonessential work, or change priorities to preserve life safety.

Security (physical/corporate) / Program Management

Pre-identify access control points and re-entry rules that are staffable (who checks, who approves, how documented).

Build a relief cadre for perimeter, credential checks, and controlled re-entry operations.

Pre-stage vendor options (guard services, traffic control, temporary fencing, badging support) for rapid expansion.

Emergency Management / Resilience / Continuity

Define operational period rhythm for your org: briefing cadence, SITREP format, handoff process, and demobilization triggers.

Run a short functional drill on “Day 2 operations” (handoffs + fatigue + continuity), not just “Day 1 response.”

Maintain a resource-tracking method that works under load (roles, assignments, time-on-duty, and task backlog).

IT/Cyber / Systems Security (if applicable)

Validate emergency comms stack under surge conditions (mobile-first access, MFA/account recovery, priority notifications, redundancy).

Establish an incident-log tool and permissions model that supports rapid onboarding without overexposing data.

HR / Workforce / Insider Risk (if applicable)

Build an employee accountability + assistance playbook: check-ins, lodging guidance, pay/leave rules, and EAP coordination.

Create policies for “disrupted workforce reality” (displacement, childcare/school closures, commute disruption, remote work exceptions).

Legal / Compliance / Contracts / Supply Chain (if applicable)

Pre-negotiate surge contracts for critical vendors and define emergency procurement thresholds.

Review liability and documentation expectations for access/re-entry decisions and incident communications.

Facilities / Operations

Define “minimum viable operations” for facilities and logistics when staffing drops or access is restricted.

Pre-plan alternate routes, alternate suppliers, and staging options for region-specific disruption.

What To Do Now (Field Application)

Immediate Actions (24–72 hours)

Action 1: Name alternates for every critical response/continuity role (and confirm they can actually step in).

Action 2: Create a one-page surge roster (name, role, phone, activation method, access needs).

Action 3 (optional): Establish your operational period rhythm (briefing time, handoff time, executive update time, and an incident log owner).

Evidence to capture (what to document/log):

Role roster with alternates + time-on-duty tracking plan.

Surge onboarding checklist (access, comms, brief, logging, demob).

A sample SITREP + decision log template that can be used in the next incident.

“Done” criteria: You can run two shifts (or two operational periods) with clean handoffs, without relying on the same 2–3 people to hold every critical function.
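One way to test the "Done" criteria against a role roster is sketched below. It is a simple heuristic, not a full scheduling solver: it flags roles with fewer validated people than operational periods, and people who are listed against more critical roles than an assumed limit. The function name, the roster layout, the names in it, and the default limit of one role per person are all hypothetical.

```python
from collections import Counter


def rotation_depth_gaps(roster: dict[str, list[str]],
                        periods: int = 2,
                        max_roles_per_person: int = 1) -> dict[str, list[str]]:
    """Flag thin roles and overloaded people in a role-to-people roster.

    roster maps each critical role to the people validated to fill it.
    """
    # A role needs at least one distinct person per operational period.
    thin_roles = sorted(
        role for role, people in roster.items() if len(set(people)) < periods
    )
    # Count how many critical roles each person is listed against.
    load = Counter(person for people in roster.values() for person in set(people))
    overloaded = sorted(
        person for person, count in load.items() if count > max_roles_per_person
    )
    return {"thin_roles": thin_roles, "overloaded_people": overloaded}


# Hypothetical roster for illustration only.
roster = {
    "Incident lead": ["Avery", "Blake"],
    "Public information": ["Casey"],
    "Access control": ["Blake", "Dana"],
    "Logistics": ["Avery", "Eli"],
}
print(rotation_depth_gaps(roster))
```

In this example, "Public information" has no alternate, and Avery and Blake each back two roles, which is the "same 2–3 people" pattern the criteria warn against.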

Short-Term Actions (This week)

Action 1: Run a tabletop: “Two-track staffing for 72 hours” (incident expands while routine obligations continue).

Action 2 (optional): Conduct a mini functional drill: simulate a shift change and measure handoff quality.

Action 3 (optional): Identify the top 5 surge vendor needs and confirm whether contracts and contacts are ready.

Note from the Author

I’m less interested in whether we can write a perfect plan than whether we can staff reality. Wildfires are a fast lesson in a slow truth: resilience is often limited by people capacity and sustained coordination, not by the quality of the binder. And as you can see, the personality traits of leadership had nothing to do with how this response played out. Multiple people have also been charged with arson, so blaming a fire chief for reasons unrelated to staffing (staffing levels require higher approvals and budgets, which sit above the chief’s pay grade) does nothing to protect people or property from fires.

Reference List

CAL FIRE. (2025a). Eaton Fire. California Department of Forestry and Fire Protection. https://www.fire.ca.gov/incidents/2025/1/7/eaton-fire/

CAL FIRE. (2025b). Palisades Fire. California Department of Forestry and Fire Protection. https://www.fire.ca.gov/incidents/2025/1/7/palisades-fire/

Congressional Research Service. (2025). January 2025 Los Angeles County wildfires (IF12871; Version 2). https://www.congress.gov/crs-product/IF12871

County of Los Angeles. (2025a). Eaton & Palisades Fires after-action reviews. https://lacounty.gov/aar/

County of Los Angeles. (2025b, September 25). LA County releases after-action review of alert notification systems and evacuation policies for the Eaton and Palisades fires. https://lacounty.gov/2025/09/25/la-county-releases-after-action-review-of-alert-notification-systems-and-evacuation-policies-for-the-eaton-and-palisades-fires/

County of Los Angeles. (2025c, April 16). New interactive dashboard tracks environmental and health monitoring following January wildfires. https://lacounty.gov/2025/04/16/new-interactive-dashboard-tracks-environmental-and-health-monitoring-following-january-wildfires/

Federal Emergency Management Agency. (2017). National Incident Management System (3rd ed.). U.S. Department of Homeland Security. https://www.fema.gov/sites/default/files/2020-07/fema_nims_doctrine-2017.pdf

Government Accountability Office. (2025). Disaster assistance high-risk series: Federal response workforce readiness (GAO-25-108598). https://www.gao.gov/products/gao-25-108598

National Fire Protection Association. (2022). NFPA 1600: Standard on continuity, emergency, and crisis management. https://www.nfpa.org/codes-and-standards/all-codes-and-standards/list-of-codes-and-standards/detail?code=1600